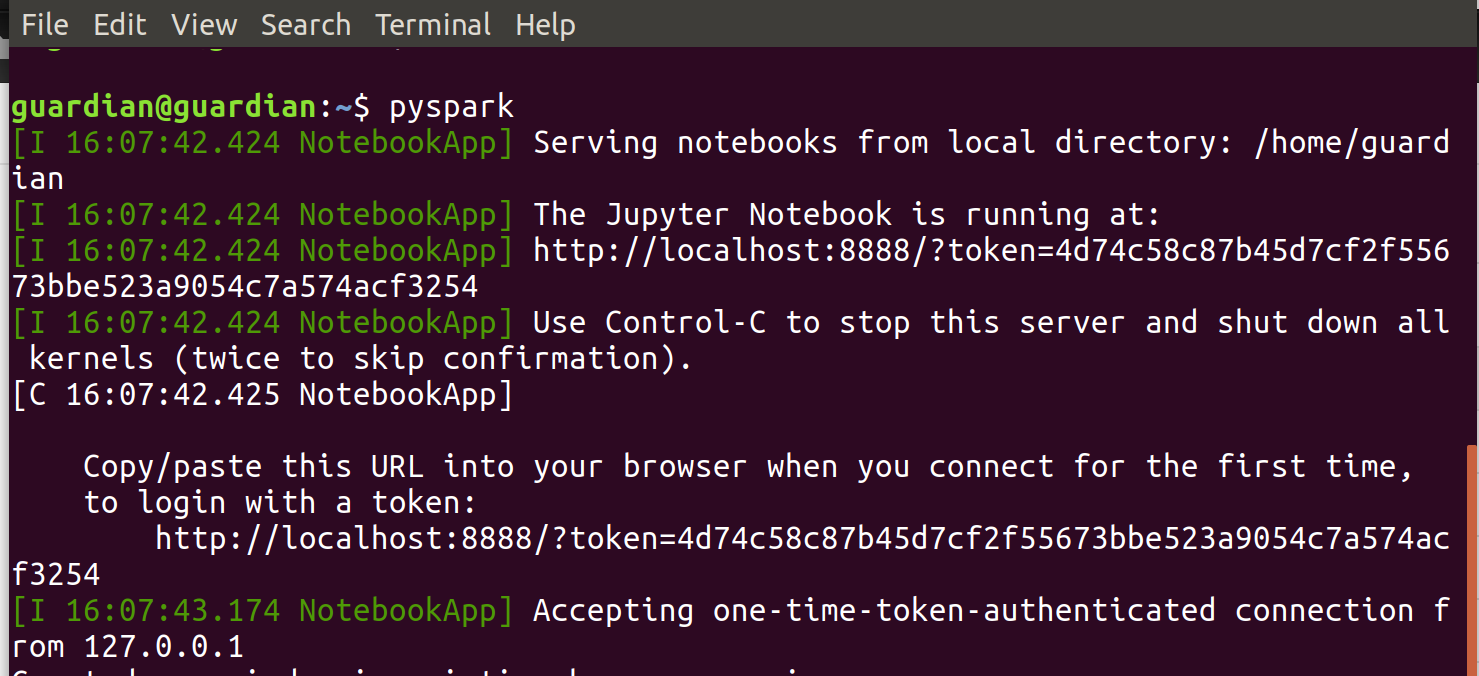

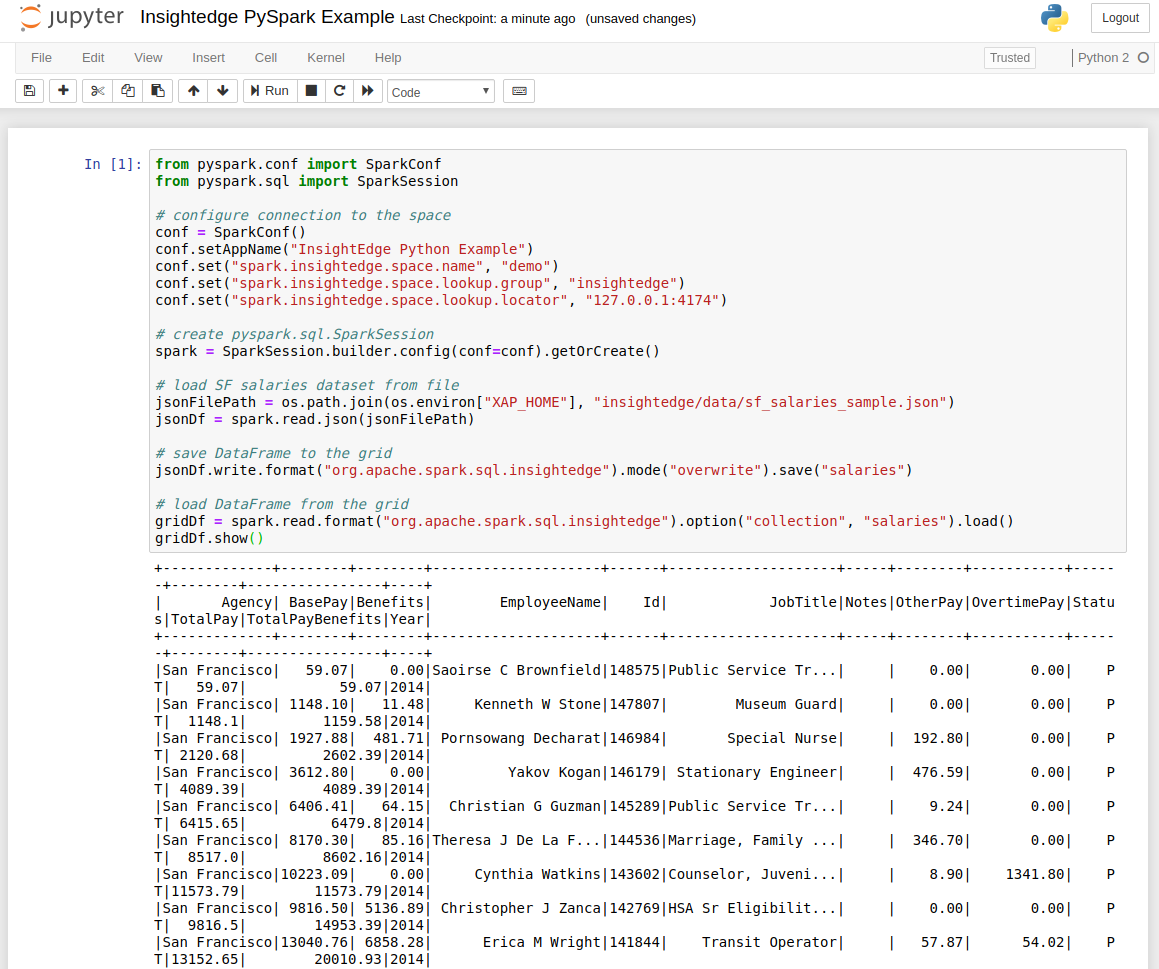

Congratulations, you have Spark2 set up on your Cloudera cluster. The ability to quickly test and analyse your data is essential to developing any decent machine learning algorithm, and Jupyter notebooks are the current de facto standard for this purpose. The following script is therefore intended to be installed on any server that data scientists/engineers use as a development environment and that is part of your Cloudera cluster.
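As a rough illustration of kernel-based switching between Spark versions, here is a minimal sketch (under stated assumptions, and not necessarily the script referred to above). It writes one Jupyter `kernel.json` per Spark install. The two parcel paths, the kernel names, and the helper names `kernel_spec` and `write_kernels` are assumptions for illustration; check where the parcels are actually unpacked on your hosts.

```python
import json
from pathlib import Path

# Assumed parcel locations; verify them on your own hosts.
SPARK_HOMES = {
    "spark1": "/opt/cloudera/parcels/CDH/lib/spark",
    "spark2": "/opt/cloudera/parcels/SPARK2/lib/spark2",
}


def kernel_spec(name, spark_home, python="python"):
    """Build a Jupyter kernel.json dict whose environment points at one Spark install."""
    return {
        "display_name": f"PySpark ({name})",
        "language": "python",
        "argv": [python, "-m", "ipykernel_launcher", "-f", "{connection_file}"],
        "env": {
            "SPARK_HOME": spark_home,
            "PYSPARK_PYTHON": python,
        },
    }


def write_kernels(kernels_dir):
    """Write one kernel directory per Spark version and return the created files."""
    written = []
    for name, home in SPARK_HOMES.items():
        kernel_dir = Path(kernels_dir) / f"pyspark_{name}"
        kernel_dir.mkdir(parents=True, exist_ok=True)
        spec_file = kernel_dir / "kernel.json"
        spec_file.write_text(json.dumps(kernel_spec(name, home), indent=2))
        written.append(spec_file)
    return written
```

Pointing `write_kernels` at a Jupyter kernels directory (for example `~/.local/share/jupyter/kernels` on Linux) makes both kernels selectable in the notebook UI. Note that `SPARK_HOME` alone does not put `pyspark` on the Python path; that is commonly handled with `PYTHONPATH` entries or the `findspark` package.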

#Install apache spark jupyter notebook update#

Deploy the client configuration update and restart any stale services.

Also note that the Spark history server will attempt to write application history into HDFS, by default in this location: “/user/spark/spark2ApplicationHistory”. Make sure permissions are correctly assigned for the respective user: `hdfs dfs -chown spark:spark /user/spark/spark2ApplicationHistory` followed by `hdfs dfs -chmod 755 /user/spark/spark2ApplicationHistory`.

Finally, deploy the Spark2 gateway role on all hosts where you wish to run Spark 2. Gateways are a special type of role that just tells Cloudera that the respective cluster configurations should be installed and updated on that host.
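The permission commands above can be wrapped in a small helper so they are easy to review before running. This is a minimal sketch: the `-mkdir -p` step is an assumption added in case the directory does not exist yet, and the function names are hypothetical. It defaults to a dry run that only prints the commands.

```python
import subprocess

HISTORY_DIR = "/user/spark/spark2ApplicationHistory"  # default location named in the text


def history_dir_commands(path=HISTORY_DIR, owner="spark:spark", mode="755"):
    """Return the hdfs commands that create the directory and set its owner and mode."""
    return [
        ["hdfs", "dfs", "-mkdir", "-p", path],  # assumption: directory may be missing
        ["hdfs", "dfs", "-chown", owner, path],
        ["hdfs", "dfs", "-chmod", mode, path],
    ]


def prepare_history_dir(dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in history_dir_commands():
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    prepare_history_dir(dry_run=True)
```

Running it with `dry_run=False` requires the `hdfs` client on the path and a user allowed to change ownership in HDFS.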

#Install apache spark jupyter notebook install#

Downloading the parcel can be done directly in the Cloudera Manager GUI. In the parcels configuration, add the respective Spark2 URL. In my case, I was using 2.4.0, so I added the parcel URL for that release. You should then download, distribute and activate the parcel on all hosts of your cluster where you want to set up Spark.

On the Cloudera home page you should now see Spark2 listed among the different services. Click on the Spark2 service. On the service page, click on the “Actions” button, and then “Add Role Instances”. The first and mandatory role you will need to set up is the Spark History server. If you already have a Spark History server (for Spark1) installed on the same host, make sure you install the new one on a non-conflicting port, which will be 18089 instead of the usual 18088.
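Before assigning the history server role, it can help to confirm which of the two ports is actually taken on the host. The following is a minimal sketch using only the standard library; the helper names are hypothetical, and the check is only meaningful when run on the history server host itself.

```python
import socket


def port_in_use(port, host="127.0.0.1", timeout=1.0):
    """Return True if something accepts TCP connections on host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        return sock.connect_ex((host, port)) == 0


def pick_history_port(candidates=(18088, 18089)):
    """Return the first candidate port that is currently free, or None."""
    for port in candidates:
        if not port_in_use(port):
            return port
    return None


if __name__ == "__main__":
    print("Free history server port:", pick_history_port())
```

If a Spark1 history server is already listening on 18088, `pick_history_port()` skips it and returns 18089, provided that port is free.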

#Install apache spark jupyter notebook download#

Last but not least, do note that, although Cloudera allows you to run Spark1 and Spark2 in parallel on the same cluster, it does not support having multiple Spark2 versions. This is understandable, as default paths could become a mess.

If everything looks good so far, it is time to familiarize yourself with the installation process. Whenever ready, the first step is to download the respective Custom Service Descriptor (CSD) to your Cloudera Manager instance/server. CSDs are metadata files that describe a product for use with Cloudera. Put the CSD jar in Cloudera's configured local location, which by default is /opt/cloudera/csd. Now follow the instructions provided by Cloudera to install an add-on service. If all went well, it is time to download the Spark2 parcel.
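Placing the CSD jar can be scripted. Here is a minimal sketch, assuming the jar has already been downloaded locally; `install_csd` is a hypothetical helper, and the `644` file mode is an assumption about what Cloudera Manager needs to read the file. Ownership changes and the Cloudera Manager restart are left to Cloudera's own add-on service instructions.

```python
import os
import shutil
from pathlib import Path


def install_csd(jar_path, csd_dir="/opt/cloudera/csd"):
    """Copy a CSD jar into the CSD directory and make it world-readable."""
    jar = Path(jar_path)
    if jar.suffix != ".jar":
        raise ValueError(f"expected a .jar file, got {jar.name}")
    target_dir = Path(csd_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / jar.name
    shutil.copy2(jar, target)
    os.chmod(target, 0o644)  # rw-r--r-- (assumed mode)
    return target


# Example (hypothetical file name, typically needs root for the default directory):
# install_csd("/tmp/spark2-csd.jar")
```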

Just to be on the safe side, also have a look at the release notes and incompatible changes pages. This should help you choose the Spark 2 version you intend to set up, and make sure all the features you require are there.

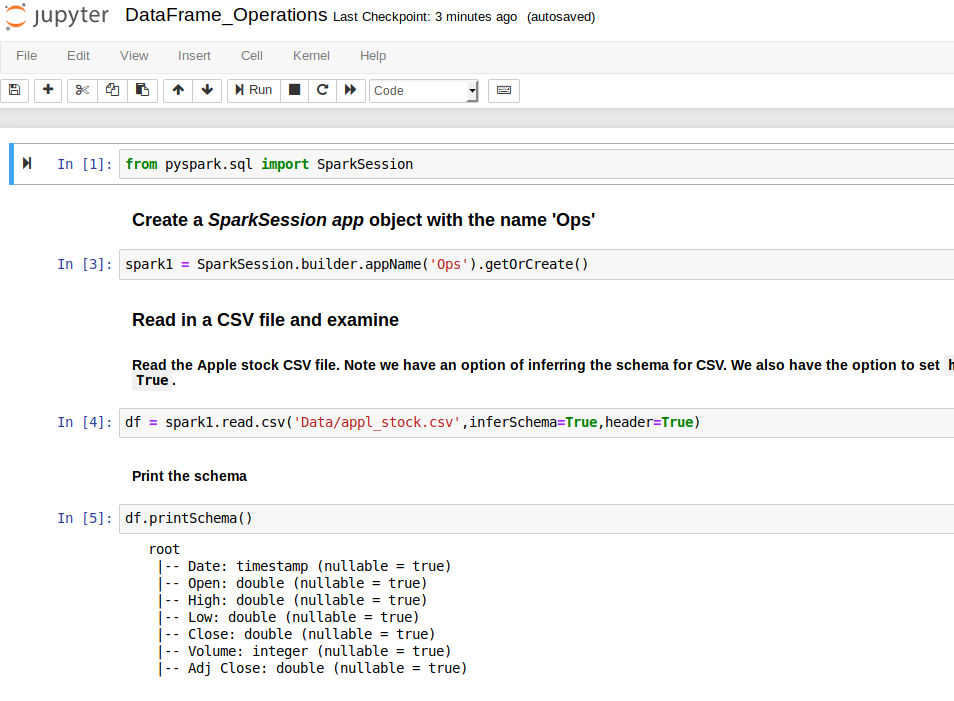

In on-premises contexts, the speed of operational change is significantly slower. This post summarizes the steps for deploying Apache Spark 2 alongside Spark 1 with Cloudera, and for installing Python Jupyter notebooks that can switch between Spark versions via kernels. Given that this is a very frequent setup in big data environments, I thought I would make life easier for “on-premise engineers” and, hopefully, speed things up just a little bit. That is exactly the case in one of the projects I am currently working on, where a volume of 10 TB/day is the minimum norm. It is in these scenarios that vendors such as Cloudera (now merged with Hortonworks) and MapR (now acquired by Hewlett Packard Enterprise) have their sweet spot.

First, make sure you meet all requirements before you proceed. Note that some might be optional, such as Scala. In my case only PySpark was required, so Scala did not need to be set up on the cluster.

Considering how fast the cloud tech stack evolves, it has been a long time since Apache Spark version 2 was introduced (26-07-2016, to be more precise). But moving into the cloud is not an easy solution for all companies, since data volumes can make such a move prohibitive.

#Install apache spark jupyter notebook code#

"fatal_error_suggestion": "The code failed because of a fatal error:\n\t.\n\nSome things to try:\na) Make sure Spark has enough available resources for Jupyter to create a Spark context.\nb) Contact your Jupyter administrator to make sure the Spark magics library is configured correctly.\nc) Restart the kernel.Yes, this topic is far from new material. "livy_session_startup_timeout_seconds": 60,